SHAP values for beginners: What they mean and their applications

By A Mystery Man Writer

Description

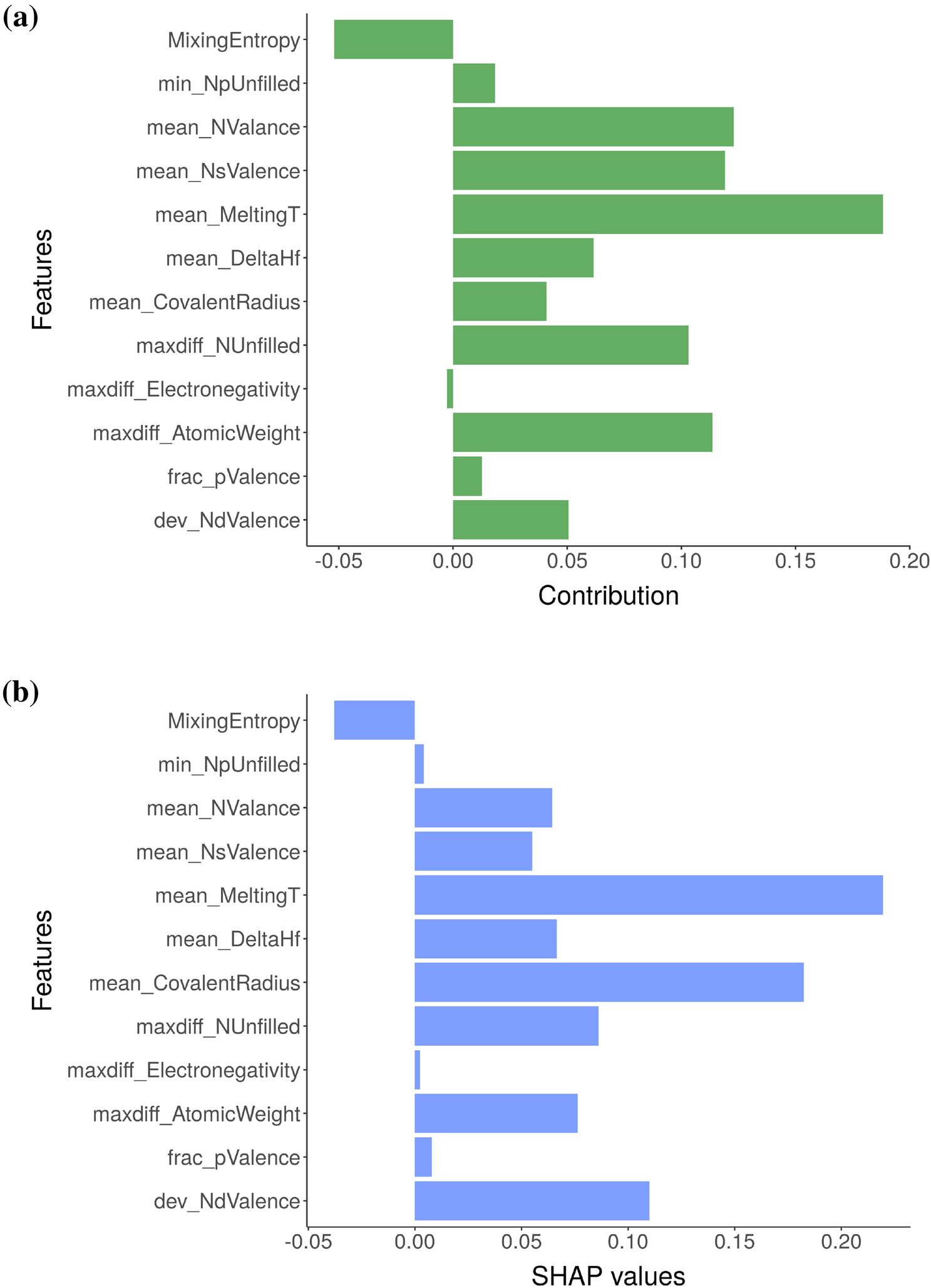

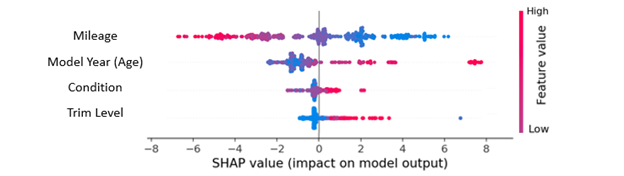

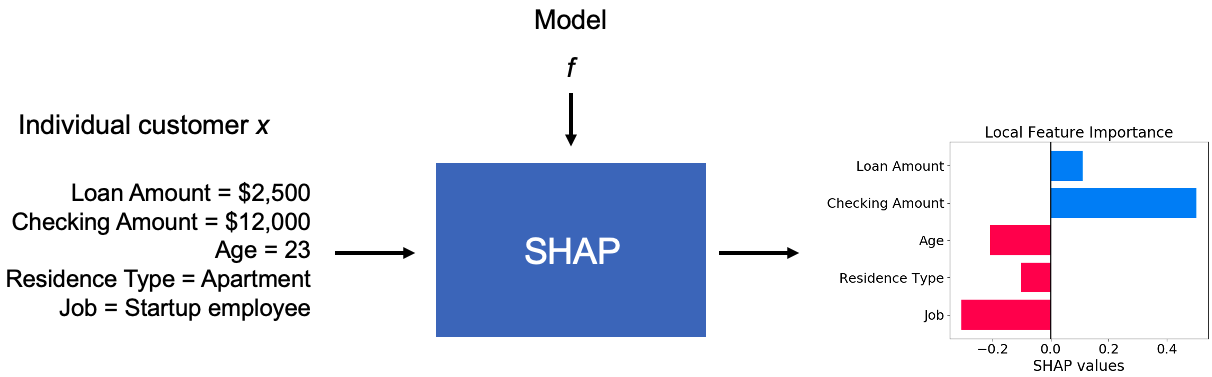

SHAP is the most powerful Python package for understanding and debugging your machine-learning models. We learn to interpret SHAP values for both continuous
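To make concrete what a SHAP value means, here is a minimal sketch that computes exact Shapley values for a toy model in plain Python. It does not use the `shap` package and is not how that package computes its values in practice (the package relies on model-specific and approximate algorithms and usually averages over a background dataset). The `model` function, the input `x`, and the single all-zeros `baseline` are hypothetical choices made purely for illustration.

```python
from itertools import combinations
from math import factorial


def model(x):
    # Hypothetical toy model: two linear terms plus one interaction term.
    return 2.0 * x[0] + 1.0 * x[1] + 0.5 * x[0] * x[2]


def shapley_values(f, x, baseline):
    """Exact Shapley values of f at x, relative to a single baseline point."""
    n = len(x)

    def value(subset):
        # Features in `subset` take their value from x, the rest from baseline.
        z = [x[i] if i in subset else baseline[i] for i in range(n)]
        return f(z)

    phi = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        total = 0.0
        for size in range(n):
            # Shapley weight for coalitions of this size that exclude feature i.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                # Marginal contribution of feature i when added to coalition s.
                total += weight * (value(s | {i}) - value(s))
        phi.append(total)
    return phi


x = [1.0, 2.0, 3.0]
baseline = [0.0, 0.0, 0.0]  # assumed reference input
phi = shapley_values(model, x, baseline)

print("Shapley values:", phi)
print("Sum of values:", sum(phi))
print("f(x) - f(baseline):", model(x) - model(baseline))
```

The per-feature values sum to the difference between the prediction at `x` and the prediction at the baseline, which is the additivity property that SHAP plots rely on. The interaction term's contribution is split evenly between the two features involved. Enumerating every coalition costs time exponential in the number of features, so this brute-force version is only practical for very small examples.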

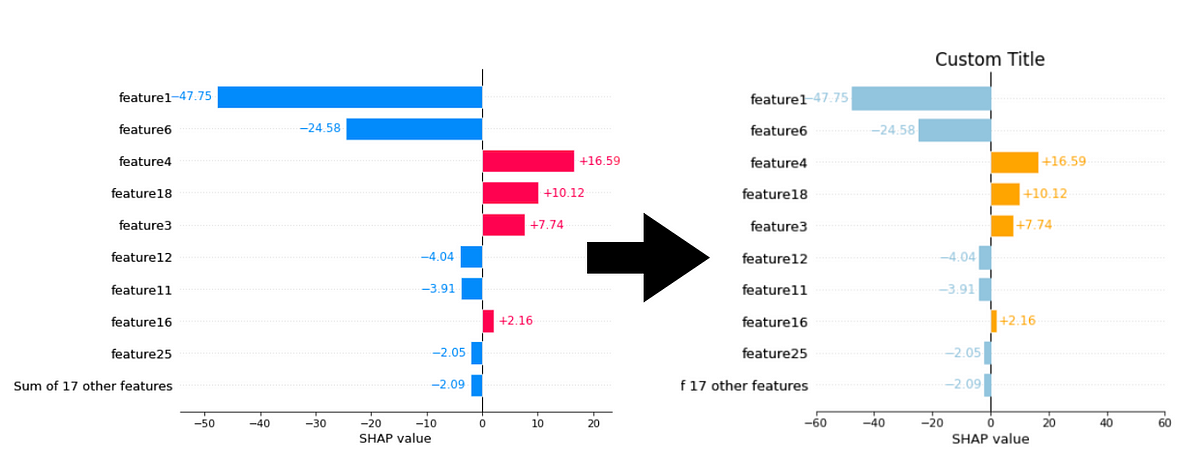

Feature importance based on SHAP-values. On the left side, the

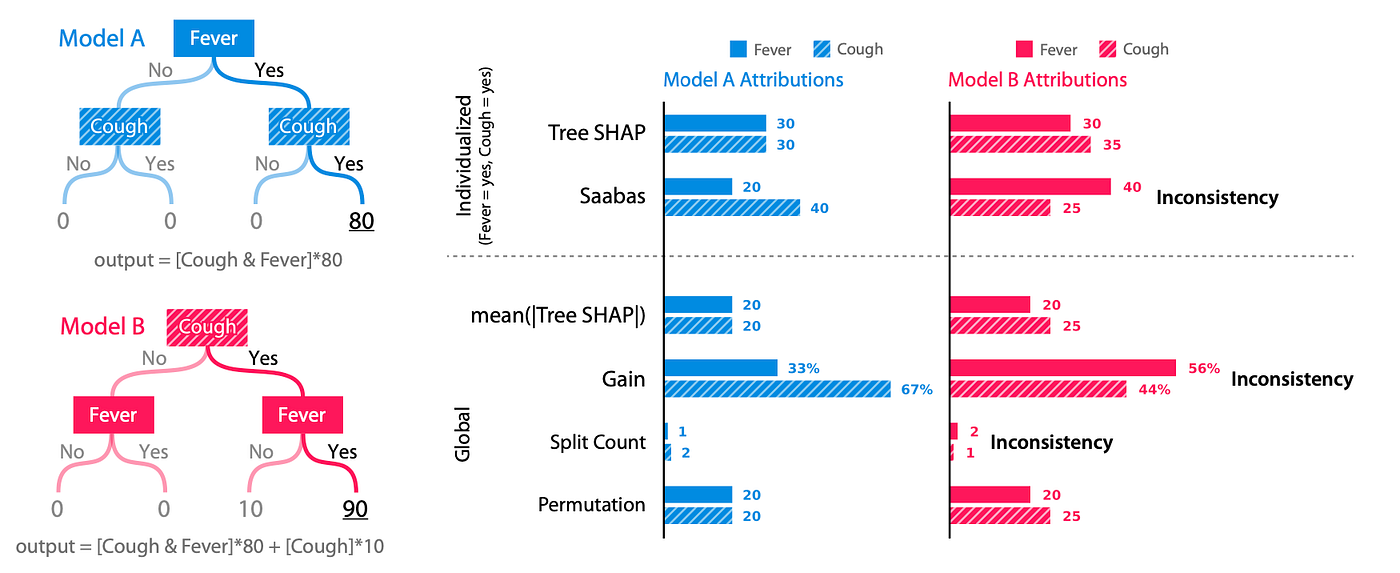

SHAP for Interpreting Tree-Based ML Models, by Jeff Marvel

GitHub - shap/shap: A game theoretic approach to explain the

Understanding machine learning with SHAP analysis - Acerta

How to interpret and explain your machine learning models using

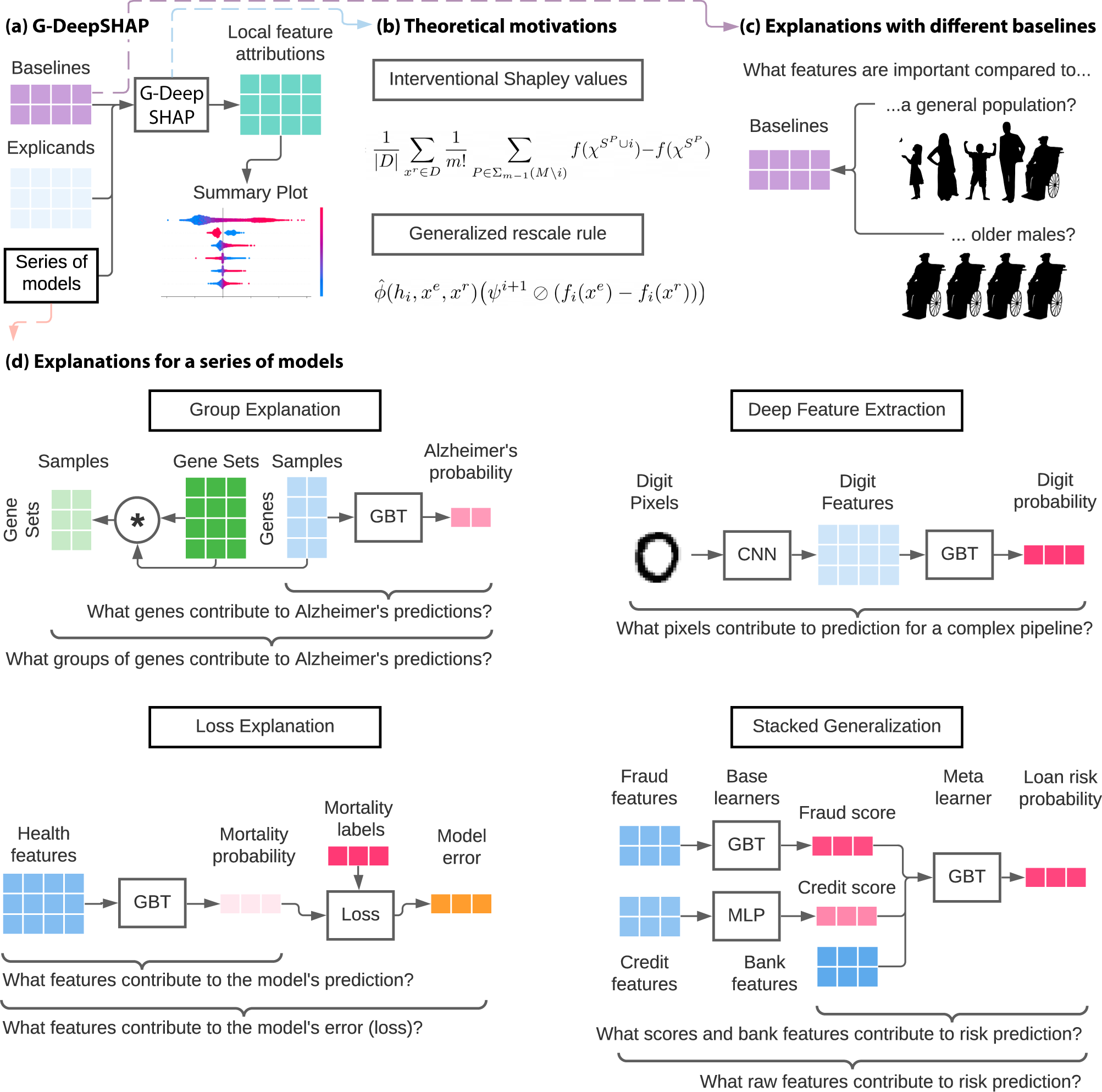

Explaining a series of models by propagating Shapley values

Shapley Value For Interpretable Machine Learning

SHAP Values: An Intersection Between Game Theory and Artificial

python - Using SHAP to explain DNN model but my summary_plot is

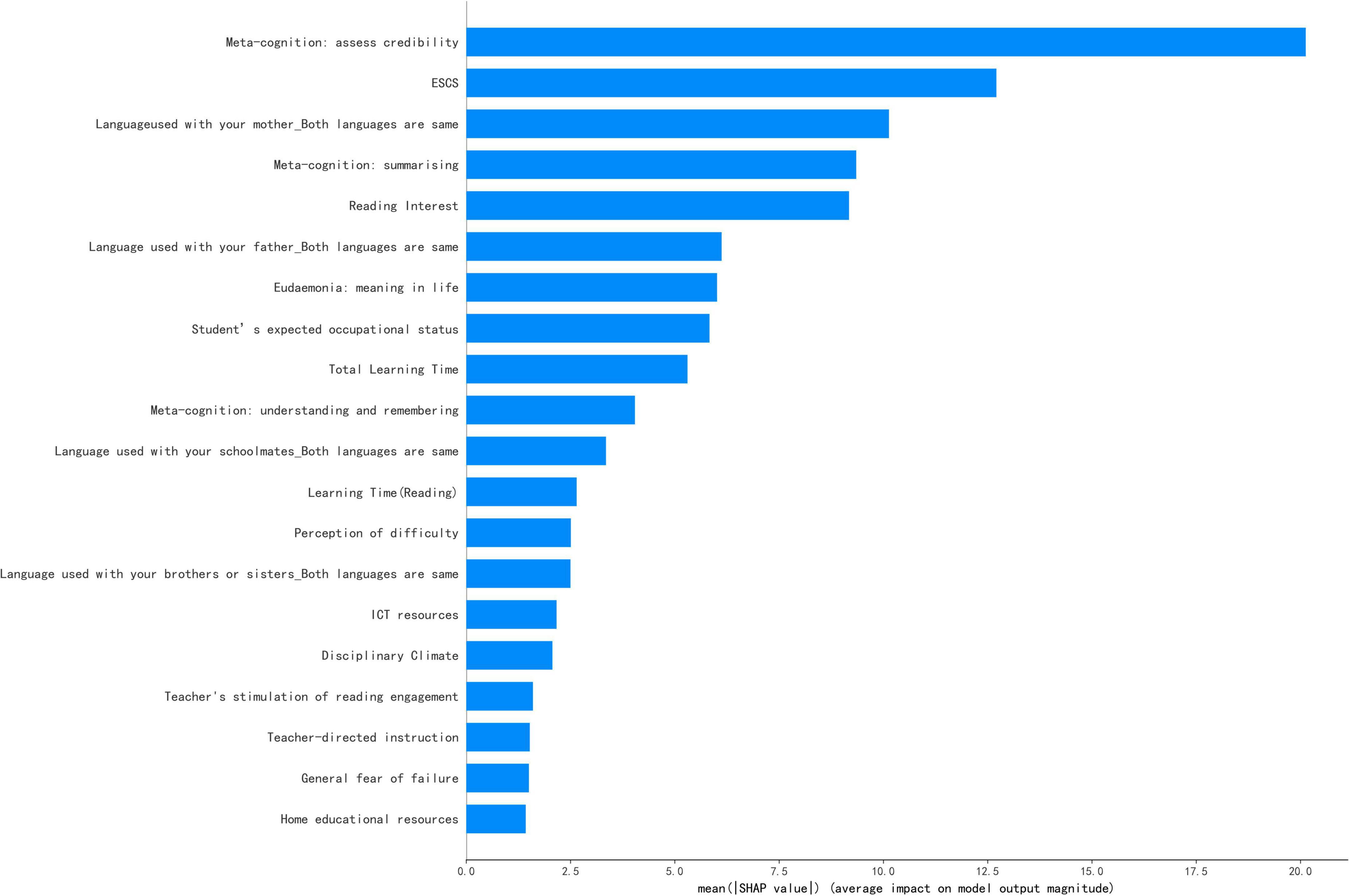

Frontiers Factors influencing secondary school students' reading

Explaining ML models with SHAP and SAGE
