Using LangSmith to Support Fine-tuning

By A Mystery Man Writer

Summary

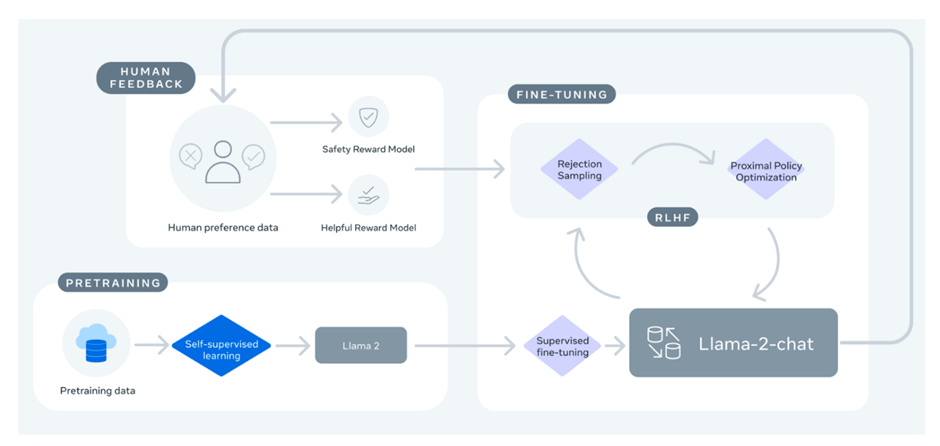

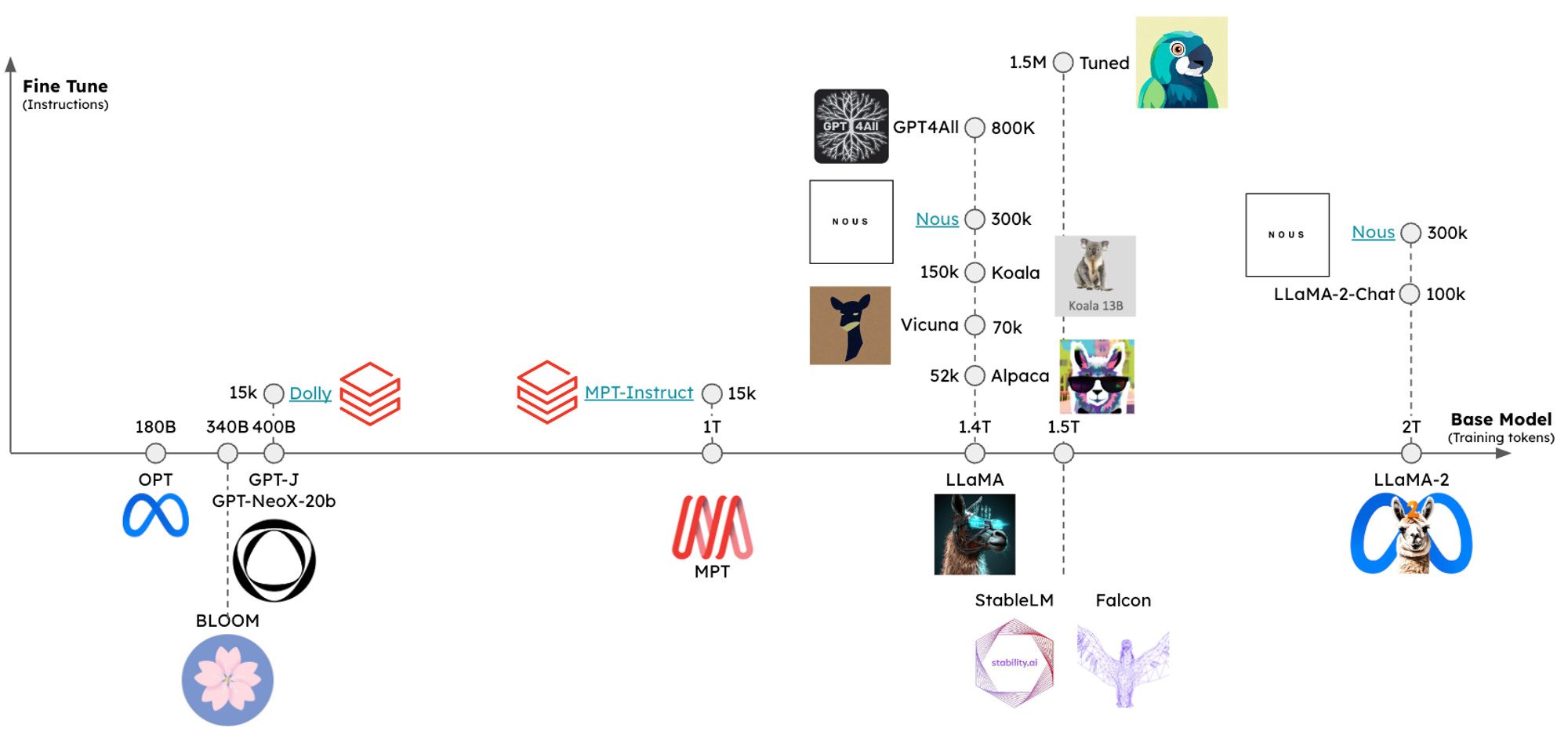

We created a guide for fine-tuning and evaluating LLMs, using LangSmith for dataset management and evaluation. The guide covers two training paths: an open-source LLM trained with Colab and Hugging Face, and OpenAI's new fine-tuning service. As a test case, we fine-tuned LLaMA2-7b-chat and gpt-3.5-turbo on an extraction task (knowledge graph triple extraction), using training data exported from LangSmith, and then evaluated the results in LangSmith as well. The Colab guide is here.
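To make the "training data exported from LangSmith" step more concrete, here is a minimal sketch of turning extraction examples into the chat-format JSONL that OpenAI's fine-tuning service accepts for gpt-3.5-turbo. It is not the code from the guide: the system prompt, the helper names (`to_chat_record`, `to_jsonl`), and the sample row are assumptions made up for illustration, and the rows are plain Python tuples standing in for whatever was exported from a LangSmith dataset.

```python
import json

# Hypothetical system prompt; the guide's actual prompt is not given in this summary.
SYSTEM_PROMPT = (
    "Extract knowledge graph triples from the text as a JSON list of "
    "[subject, predicate, object]."
)


def to_chat_record(text, triples):
    """Build one chat-format fine-tuning record from an input text and its triples."""
    return {
        "messages": [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": text},
            # The target output is the triples serialized as a JSON string.
            {"role": "assistant", "content": json.dumps(triples)},
        ]
    }


def to_jsonl(examples):
    """Serialize (text, triples) pairs as JSONL, one record per line."""
    return "\n".join(json.dumps(to_chat_record(text, triples)) for text, triples in examples)


# Made-up row standing in for examples exported from a dataset.
examples = [
    ("Marie Curie discovered polonium.", [["Marie Curie", "discovered", "polonium"]]),
]

jsonl = to_jsonl(examples)
print(jsonl)
```

Keeping the conversion in a pure function like this means the same exported examples can be reused for either training path, with only the final serialization step changing for the open-source model.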
