MPT-30B: Raising the bar for open-source foundation models

By A Mystery Man Writer

Description

Introducing MPT-30B, a new, more powerful member of our Foundation Series of open-source models, trained with an 8k context length on NVIDIA H100 Tensor Core GPUs.

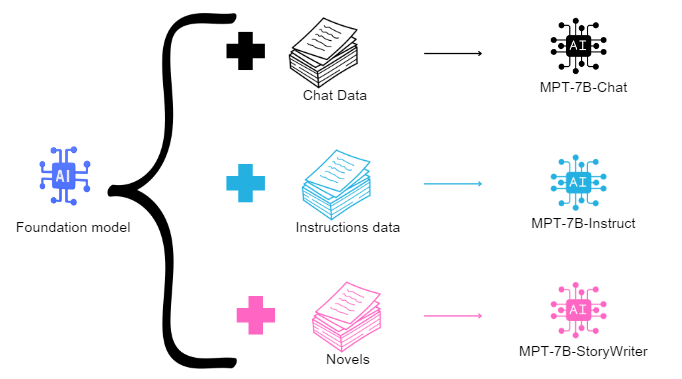

Meet MPT-7B: The Game-Changing Open-Source/Commercially Viable Foundation Model from Mosaic ML, by Sriram Parthasarathy

llm-foundry/README.md at main · mosaicml/llm-foundry · GitHub

Stardog: Customer Spotlight

Survival of the Fittest: Compact Generative AI Models Are the Future for Cost-Effective AI at Scale - Intel Community

LongLoRA: Efficient Fine-tuning of Long-Context Large Language Models

NeurIPS 2023

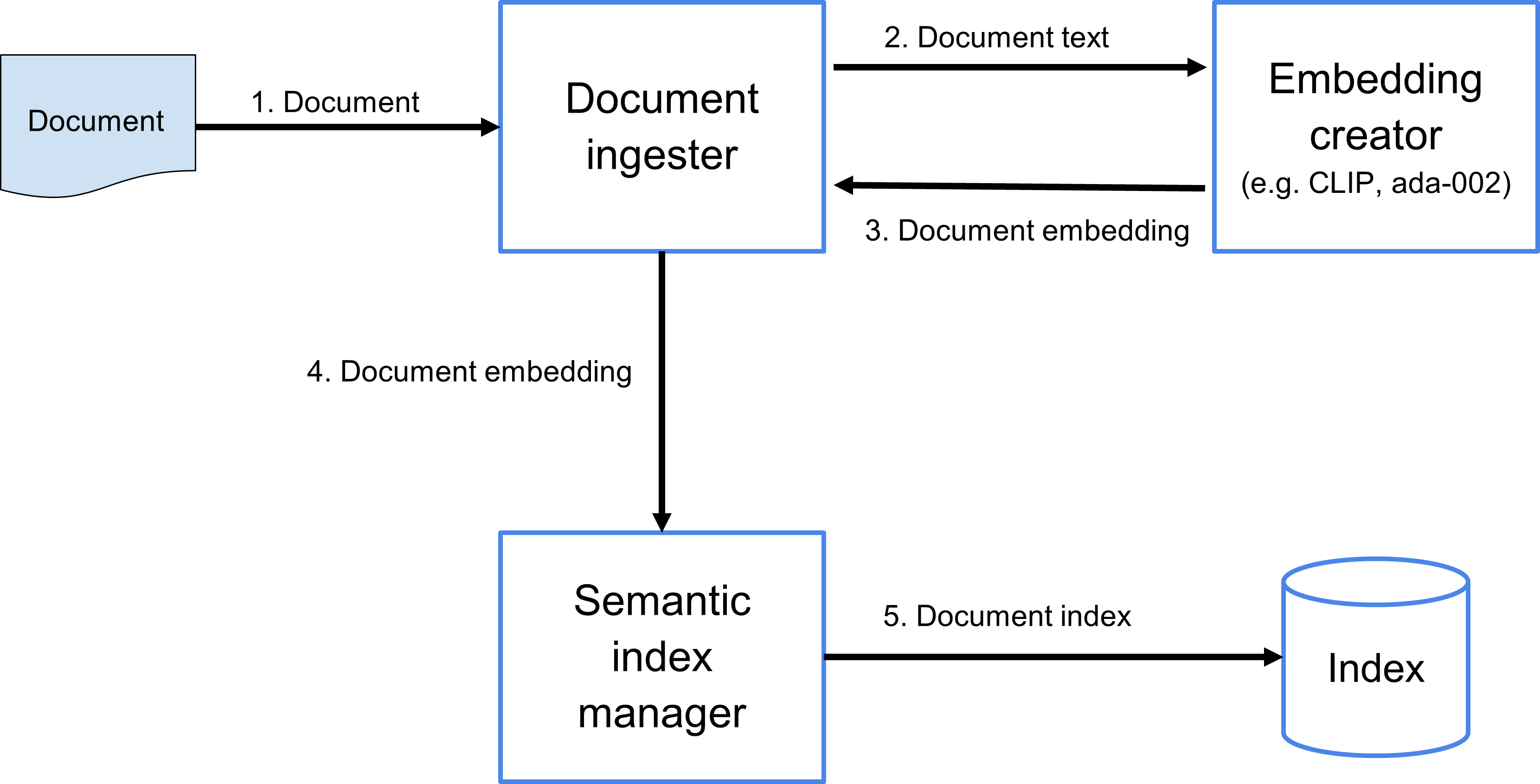

The Code4Lib Journal – Searching for Meaning Rather Than Keywords and Returning Answers Rather Than Links

Democratizing AI: MosaicML's Impact on the Open-Source LLM Movement, by Cameron R. Wolfe, Ph.D.

MosaicML Releases Open-Source MPT-30B LLMs, Trained on H100s to Power Generative AI Applications

Margaret Amori on LinkedIn: MPT-30B: Raising the bar for open-source foundation models
