DeepSpeed: Accelerating large-scale model inference and training

By A Mystery Man Writer

Description

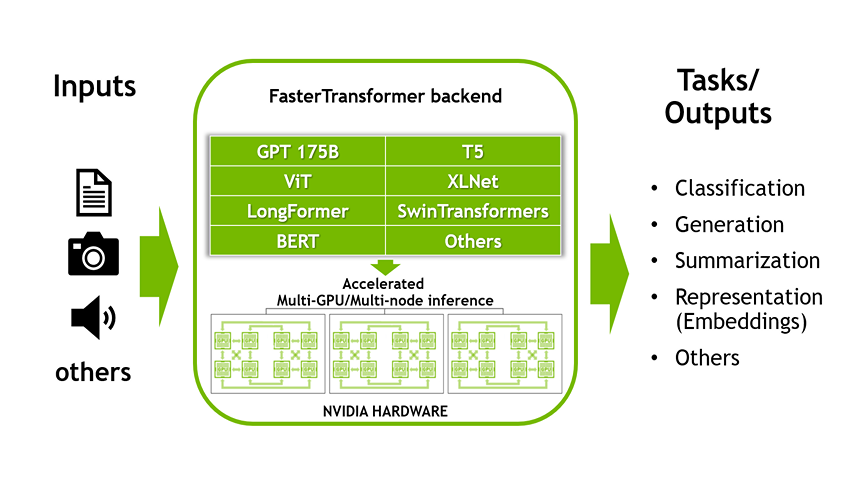

Accelerated Inference for Large Transformer Models Using NVIDIA

Is High-performance INT8 inference kernels released? · Issue

Blog - DeepSpeed

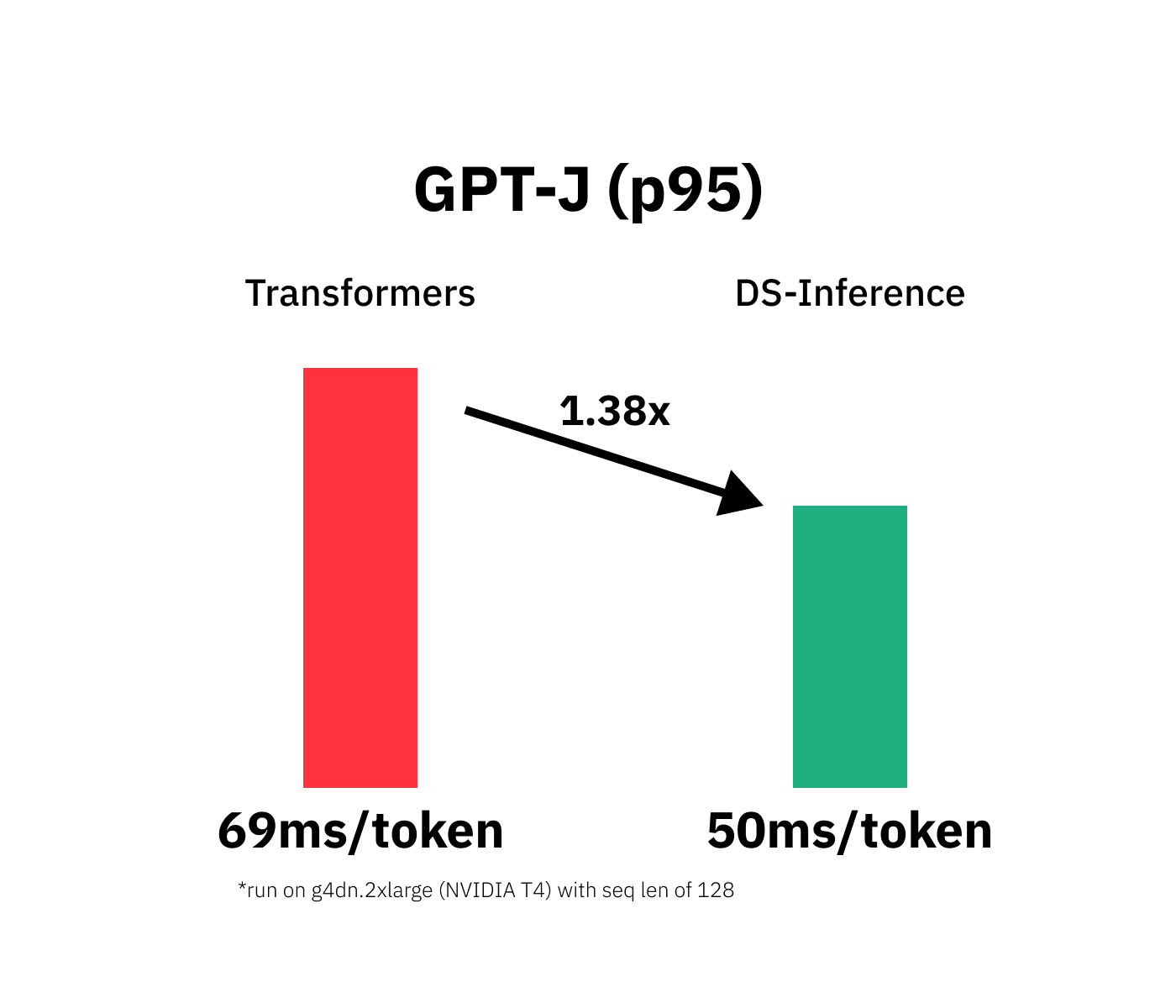

Accelerate GPT-J inference with DeepSpeed-Inference on GPUs

SW/HW Co-optimization Strategy for LLMs — Part 2 (Software)

Yuxiong He on LinkedIn: DeepSpeed: Advancing MoE inference and

[N] Improvement on model's inference from DeepSpeed team. [D] How is Jax compared? : r/MachineLearning

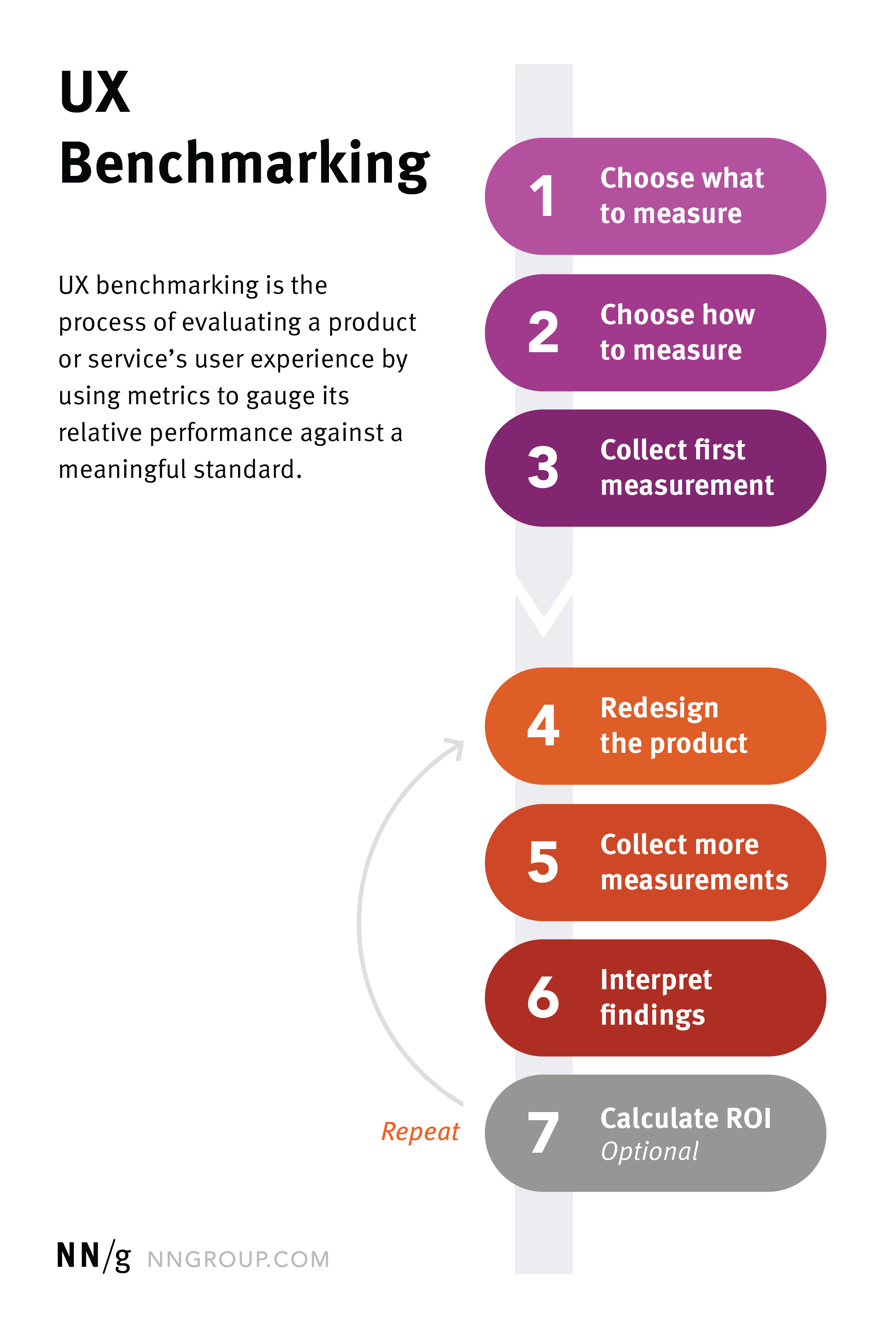

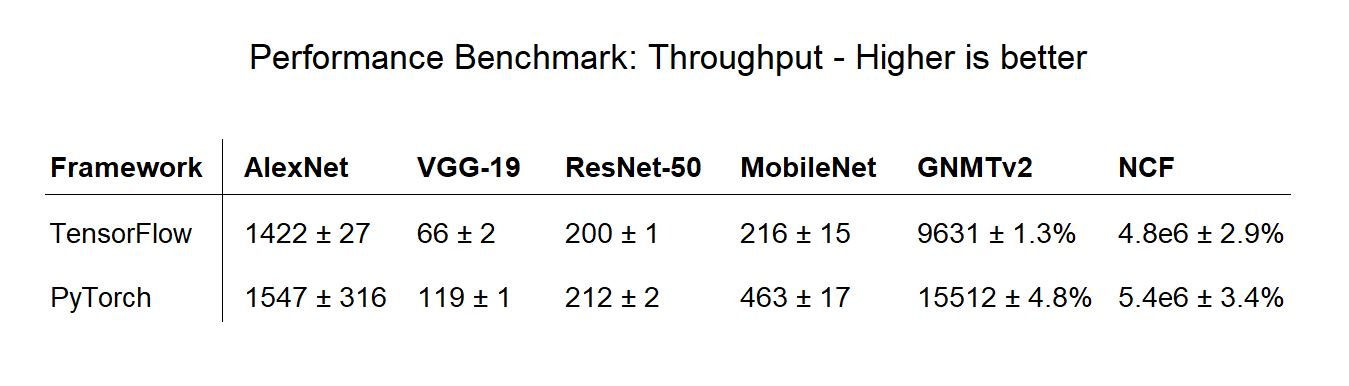

DeepSpeed (@MSFTDeepSpeed) / X

Yuxiong He on LinkedIn: DeepSpeed powers 8x larger MoE model
